Or, you can use one of the service discovery systems that Prometheus supports to have HAProxy servers added and removed dynamically. If you’re operating a cluster of load balancers, then each HAProxy server should have its own targets line. For this example, I’m running only a single instance of HAProxy, which is running on the same server as Prometheus. Once you have it, edit your prometheus.yml file so that it contains a job for HAProxy by adding the following to the scrape_configs section:īy default, Prometheus scrapes the path /metrics and since that is what we configured in HAProxy, nothing further needs to be changed. The documentation also explains how to run it as a Docker container and how to install it using a configuration management tool. This tells you how to run Prometheus as a standalone executable. To get started with Prometheus, follow the First Steps with Prometheus guide to getting up and running. Next, we’ll start Prometheus scraping the HAProxy metrics. You can also visit /stats to see the HAProxy Stats page. The commands look like this on an Ubuntu server: Compiling HAProxy for Prometheusįirst, you’ll need to compile HAProxy with the Prometheus Exporter. However, the data is extracted directly from the running HAProxy process. In fact, it exposes more than 150 unique metrics. The new HAProxy Prometheus exporter exposes all of the counters and gauges available from the Stats page.

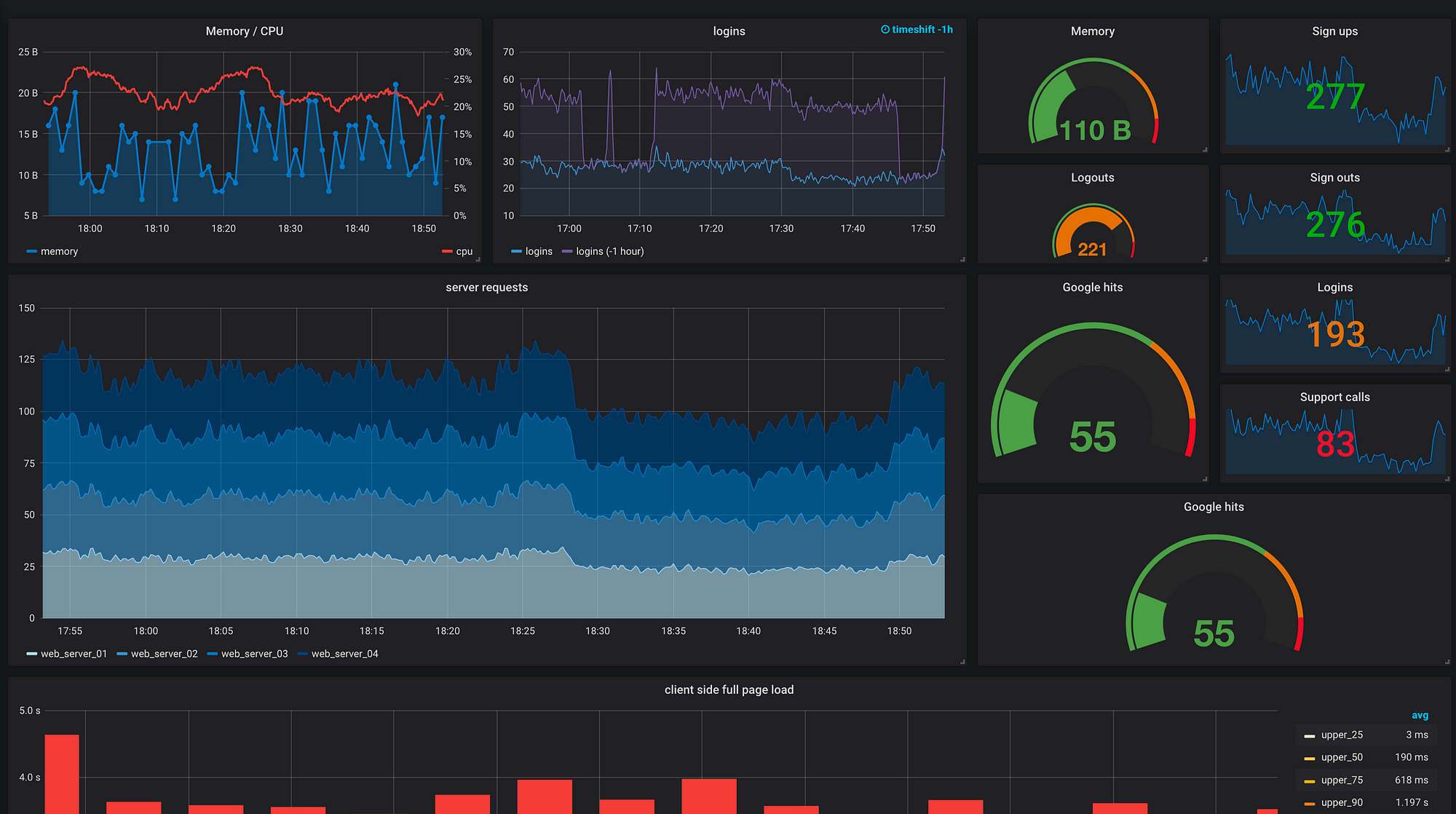

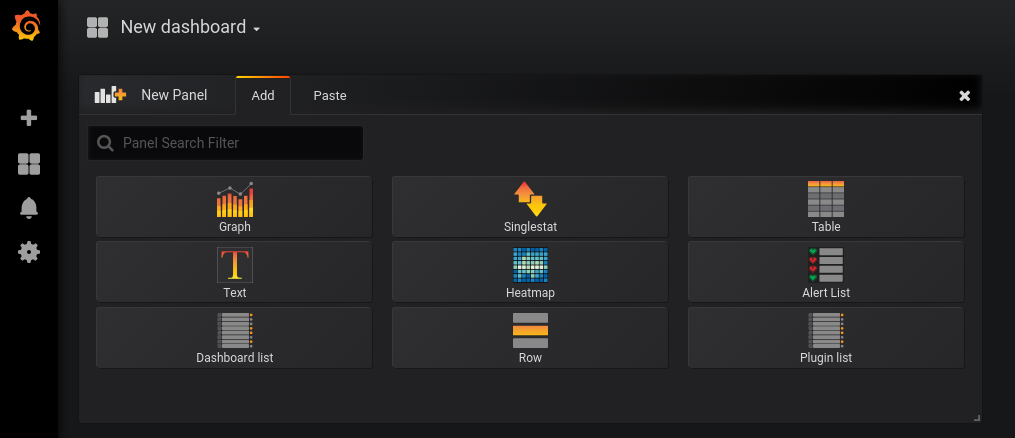

It also integrates nicely with graphing tools like Grafana and the alerting tool Alertmanager. Prometheus is especially helpful because it collects the metrics, stores them in its time-series database, and allows you to select and aggregate the data using its PromQL query language. Having this information on hand might even help you to quickly resolve that complaint at 2:27 a.m.! Graphing these statistics will help you identify when and where issues are happening and alerting will ensure that you’re notified when issues arise. With traffic flowing through HAProxy, it becomes a goldmine of information regarding everything from request rates and response times to cache hit ratios and server errors. In this blog post, we’ll explain how to set up the metrics endpoint, how to configure Prometheus to scrape it and offer some guidance on graphing the data and alerting on it. Enable the service in your HAProxy configuration file and you’ll be all set. Having Prometheus support built-in means that you don’t need to run an extra exporter process. HAProxy Enterprise users can begin using this feature today as it has been backported to version 1.9r1. The new module can be found in the HAProxy source code, under the contrib directory. Starting in version 2.0, you can compile HAProxy with native Prometheus support and expose a built-in Prometheus endpoint. In particular, many users have benefited from the HAProxy Exporter for Prometheus, which consumes the HAProxy Stats page and converts the data to the Prometheus time series. CSV is perhaps one of the easiest formats to parse and, as an effect, many monitoring tools utilize the Stats page to get near-real-time statistics from HAProxy. It can be consumed as a CSV-formatted feed-although you can also use the Runtime API to export the data as JSON. HAProxy currently provides exceptional visibility through its Stats page, which displays more than 100 metrics. 
Metrics give you essential feedback about how well, or unwell, things are going: Are customers using the new features? Did traffic rates drop after that last deployment? If there’s an error, how long has it been happening, and how many customers have likely been affected? They contain the data that inform you about the state of your systems, which in turn allows you to see patterns and make course corrections as needed.

Metrics are a key aspect of observability, along with logging and tracing.
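
As a rough illustration of the scrape job this post describes for prometheus.yml, here is a minimal sketch. The job name `haproxy`, the port `8404`, and the second host name are assumptions made for the example, not values taken from this post. Use whatever address and port your HAProxy metrics endpoint is actually bound to.

```yaml
scrape_configs:
  # Job name is an assumption; any descriptive label works.
  - job_name: 'haproxy'
    # No metrics_path is set, so Prometheus falls back to its default of /metrics.
    static_configs:
      # Single HAProxy instance on the same server as Prometheus.
      # Port 8404 is assumed for illustration.
      - targets: ['localhost:8404']
      # For a cluster, each HAProxy server would get its own targets line,
      # for example (hypothetical host name):
      # - targets: ['lb2.example.com:8404']
```

After editing the file, restart Prometheus or reload its configuration and check the Targets page in the Prometheus UI to confirm that the HAProxy endpoint is reported as up.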
